Inside p1bot: A Vishing Platform Weaponizing ElevenLabs

An analysis of p1bot.io, a subscription vishing-as-a-service platform with ElevenLabs text-to-speech hardcoded directly into its attack workflow.

Commercial AI Has a Vishing Problem

The threat intelligence community has been sounding the alarm on AI-powered social engineering for over a year. OpenAI's quarterly disruption reports have documented threat actors using LLMs to craft phishing lures, generate fake resumes, and scale influence operations. Google's Mandiant team published research in 2024 showing how AI-powered voice spoofing has been incorporated into red team operations, demonstrating just how convincing synthetic voices have become. Academic researchers have even built proof-of-concept vishing bots using off-the-shelf APIs (OpenAI's GPT for conversation, ElevenLabs for voice synthesis, Twilio for telephony) and demonstrated them against human subjects.

But there's a gap between research papers and the real criminal ecosystem. What does it look like when these capabilities aren't in a lab, but packaged into a product that anyone can subscribe to for $399 a month?

We found out. While analyzing the client-side code and network traffic of p1bot.io, a vishing-as-a-service platform, we discovered a fully productized attack toolkit with ElevenLabs' text-to-speech API hardcoded directly into the platform. Twenty-three ElevenLabs voice IDs ship with every account. Operators generate realistic IVR prompts in English, French, or Spanish, play them to victims mid-call, and capture whatever digits the victim presses (PINs, OTPs, account numbers), all in real time.

To our knowledge, this is one of the first documented instances of a commercial vishing platform embedding ElevenLabs as a core feature, moving beyond one-off misuse or research contexts into a subscription criminal service.

We reported our findings to the ElevenLabs security team, who responded promptly and collaborated with us to take action against the abusive accounts. Cyber defense is a team sport, and we thank them for their efforts.

The "Press 1" Scam: Why This Matters

Your phone rings. The caller ID shows your bank's number. An automated voice tells you there's been suspicious activity on your account and asks you to "press 1 to speak with a representative." You comply, and the moment you press that digit, you've handed control to a criminal sitting behind a dashboard, ready to extract your PIN, OTP, or account number one keystroke at a time.

These are "press 1" scams (sometimes called OTP bots or IVR fraud), and they've become one of the highest-volume voice phishing techniques in operation. The formula is effective because it's familiar: spoof a trusted caller ID, play a convincing automated prompt, and capture whatever digits the victim enters. The victim never speaks to a human. They think they're navigating a legitimate phone tree.

What makes this threat category especially dangerous now is the convergence of three trends: caller ID spoofing is trivial, AI-generated voices are indistinguishable from real ones, and platforms like p1bot have industrialized the entire workflow into a point-and-click SaaS product. No technical expertise or native speakers required. Just a crypto wallet and a Telegram account.

What p1bot Does

At its core, p1bot is a browser-based softphone designed for social engineering. It lets operators:

- Spoof any caller ID when placing outbound calls

- Generate realistic AI voice prompts using ElevenLabs TTS (23 voice options across English, French, and Spanish)

- Place calls directly from the browser via WebRTC

- Capture DTMF tones (the digits a victim presses) in real time

- Record calls for later review

- Play pre-generated audio clips mid-call to simulate automated IVR systems

The platform targets victims in the US, Canada, and UK, and charges $399/month payable exclusively in Bitcoin, Litecoin, or Monero through OxaPay.

Inside the Dashboard

The p1bot interface is a dark-themed web application that puts the entire attack workflow within a few clicks.

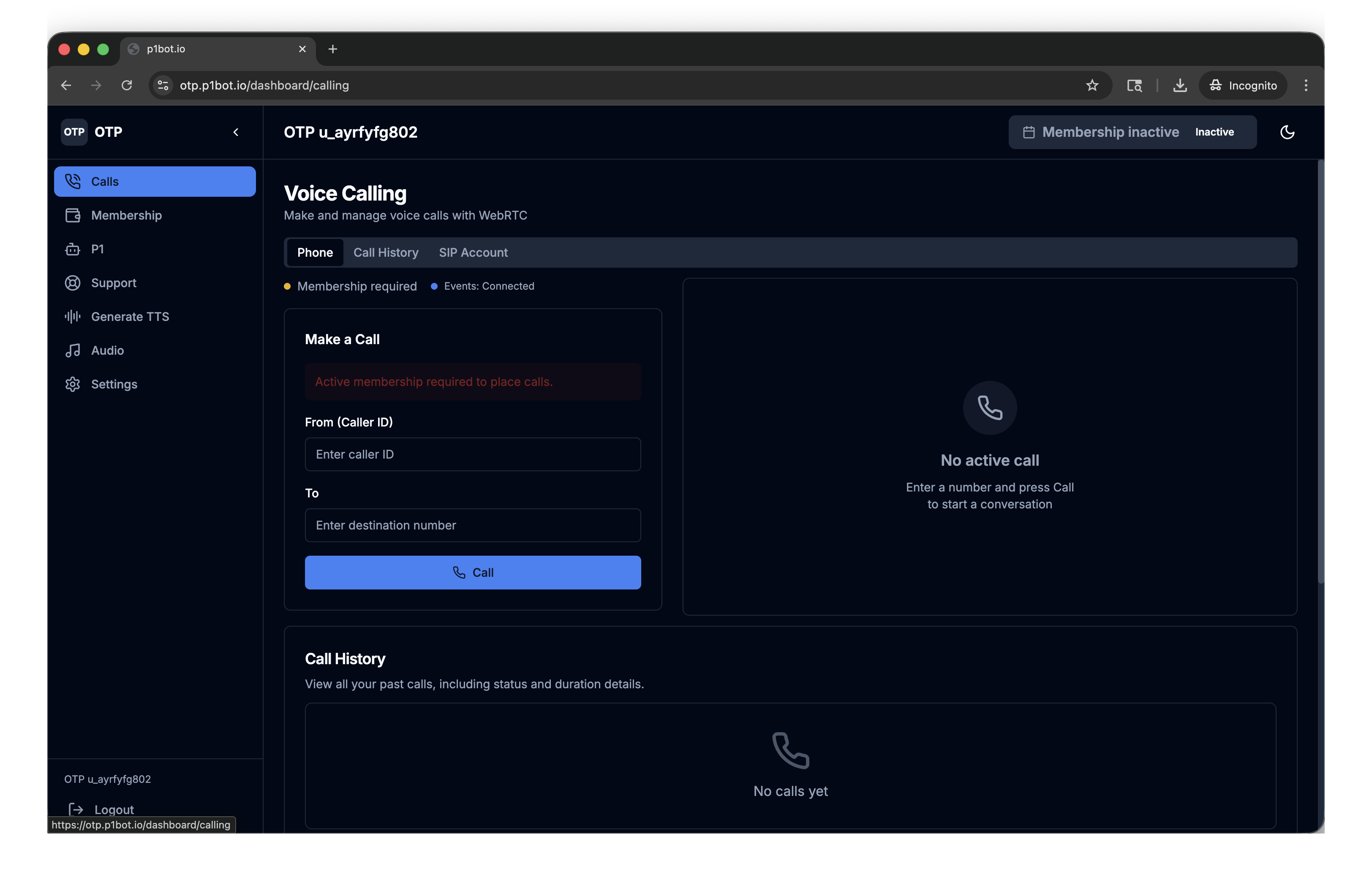

The Softphone

The Voice Calling page exposes the core of the operation: a "From (Caller ID)" field where operators enter any spoofed number, a "To" field for the victim's number, and a single "Call" button. The Call History tab logs every outbound call with status and duration. Note the "Events: Connected" indicator, which reflects the live Socket.io connection that feeds captured DTMF digits back to the dashboard in real time.

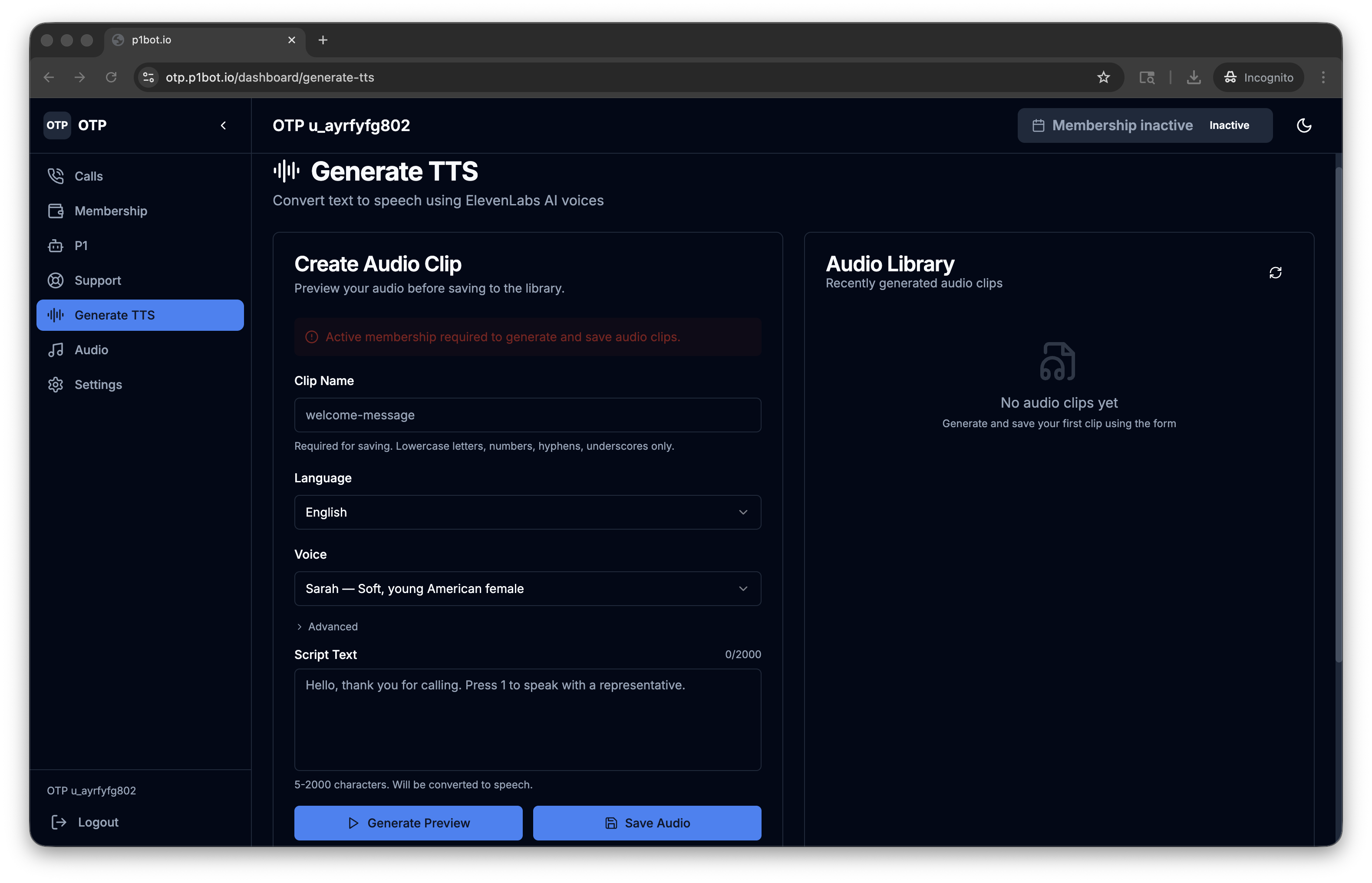

AI Voice Generation

The Generate TTS page is where operators craft their IVR lures using ElevenLabs' API. The default placeholder text ("Hello, thank you for calling. Press 1 to speak with a representative.") reveals the intended use case. Operators select from 23 voices (here, "Sarah - Soft, young American female"), generate a preview, and save clips to their library for use during live calls. This is commercial AI voice synthesis being directly weaponized for social engineering.

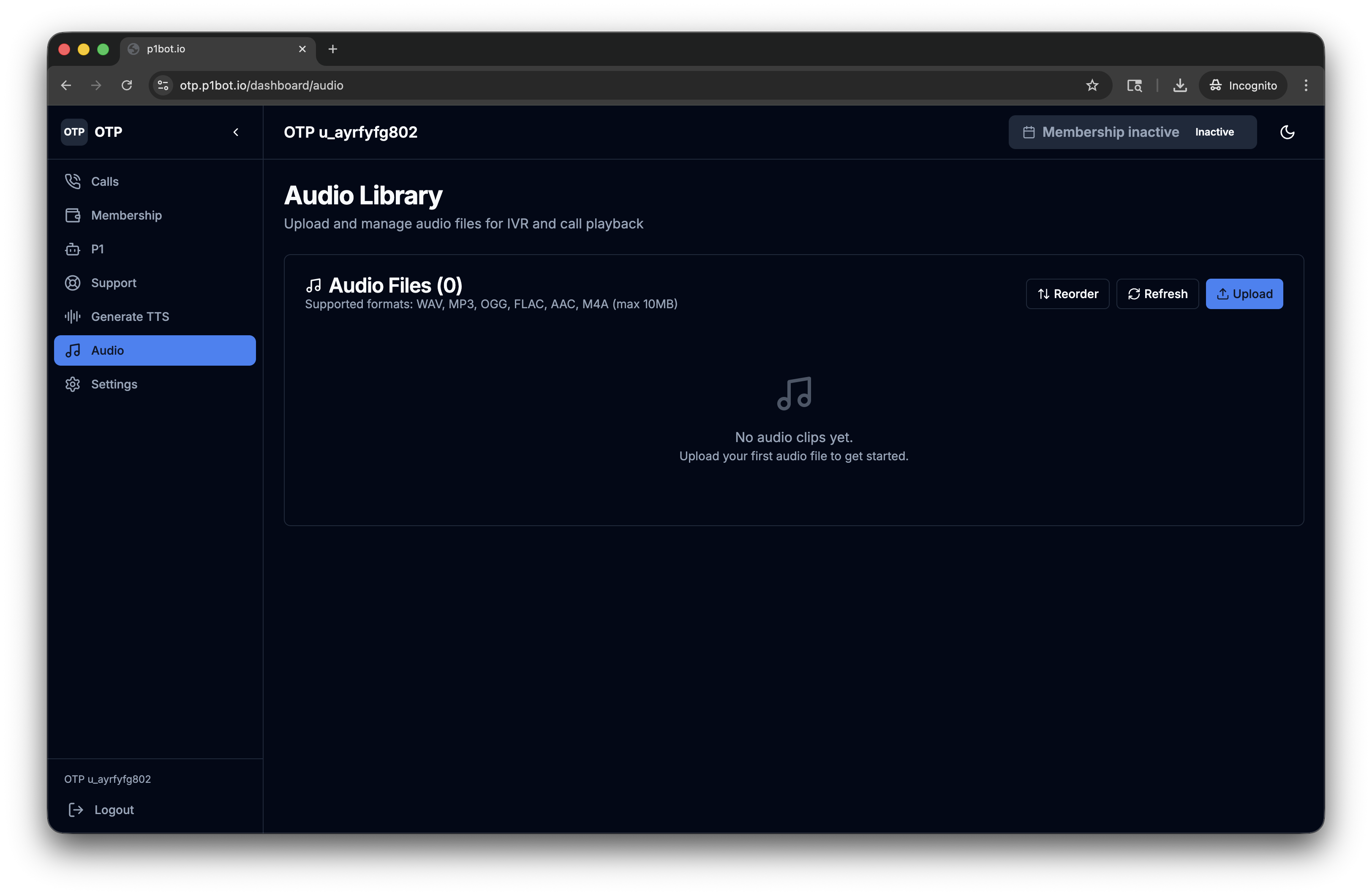

The Audio Library

The Audio Library stores generated and uploaded audio clips for on-demand playback during live calls. During a call, operators trigger clips from this library (a fake verification prompt, a "please hold" message, a custom IVR menu) while monitoring the victim's DTMF responses in real time.

How Caller ID Spoofing Works

When an operator enters a spoofed number, the client injects four SIP headers simultaneously into the outbound INVITE:

X-Caller-ID: {spoofed_number}

P-Preferred-Identity: "{spoofed_number}" <sip:{spoofed_number}@{realm}>

Remote-Party-ID: "{spoofed_number}" <sip:{spoofed_number}@{realm}>;party=calling;privacy=off;screen=no

P-Asserted-Identity: "{spoofed_number}" <sip:{spoofed_number}@{realm}>

This shotgun approach ensures the spoofed identity propagates regardless of which header downstream carriers honor.
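
For defenders with access to SIP signaling (for example at a session border controller), the same redundancy is a usable detection signal. The following is a minimal sketch, not a production parser: the function names, the threshold of three headers, and the sample message with its example.invalid realm are all assumptions made for illustration.

```python
import re

# Identity-bearing headers named in the analysis. X-Caller-ID is non-standard.
IDENTITY_HEADERS = (
    "x-caller-id",
    "p-preferred-identity",
    "remote-party-id",
    "p-asserted-identity",
)


def extract_identities(raw_message: str) -> dict[str, str]:
    """Map each identity header present in a raw SIP message to its user part."""
    found = {}
    for line in raw_message.splitlines():
        name, sep, value = line.partition(":")
        if not sep:
            continue
        key = name.strip().lower()
        if key not in IDENTITY_HEADERS:
            continue
        # Prefer the user part of a sip: URI; fall back to the bare header value.
        match = re.search(r"sip:([^@>;]+)@", value)
        found[key] = match.group(1) if match else value.strip()
    return found


def looks_like_shotgun_spoof(raw_message: str, threshold: int = 3) -> bool:
    """Flag messages where several identity headers all carry one identical value.

    The threshold is a hypothetical tuning knob, not a value from the platform.
    """
    identities = extract_identities(raw_message)
    return len(identities) >= threshold and len(set(identities.values())) == 1


# Hypothetical INVITE shaped like the header set described above.
SAMPLE_INVITE = "\r\n".join([
    "INVITE sip:15550100@example.invalid SIP/2.0",
    'From: "op" <sip:u_abc@example.invalid>;tag=1',
    "X-Caller-ID: 15550123",
    'P-Preferred-Identity: "15550123" <sip:15550123@example.invalid>',
    'Remote-Party-ID: "15550123" <sip:15550123@example.invalid>;party=calling;privacy=off;screen=no',
    'P-Asserted-Identity: "15550123" <sip:15550123@example.invalid>',
    "",
])

if __name__ == "__main__":
    print(looks_like_shotgun_spoof(SAMPLE_INVITE))
```

Legitimate trunks sometimes set two of these headers together, so a heuristic like this is better treated as one input to scoring than as a verdict on its own.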

ElevenLabs Integration: AI Voices as Attack Infrastructure

The TTS feature is where p1bot crosses from a traditional vishing tool into something more concerning. The platform doesn't just support generic text-to-speech. It ships with a hardcoded catalog of ElevenLabs voice IDs: 15 English, 4 French, and 4 Spanish voices, each mapped to a real ElevenLabs voice profile. Users can also paste custom voice IDs, meaning anyone with an ElevenLabs account can extend the platform's voice capabilities.

The default placeholder text reveals the intended use case:

"Hello, thank you for calling. Press 1 to speak with a representative."

This is the classic IVR social engineering playbook, now upgraded with AI-generated voices that sound natural, warm, and trustworthy. The old robotic TTS that might tip off a savvy victim has been replaced with voices like "Sarah - Soft, young American female" that are designed to put people at ease.

The TTS workflow is straightforward:

- Generate a clip with chosen voice and text

- Save it to the Audio Library

- During a live call, play clips on demand via the dashboard

We've seen open-source OTP bot kits on GitHub that wire together ElevenLabs and Twilio, and academic researchers demonstrated a fully automated AI vishing system (ViKing) using the same stack in a controlled study. But p1bot represents something different: a commercial, subscription-based platform where ElevenLabs is embedded as a first-class feature, not bolted on by individual operators. The integration is polished, the voice catalog is curated, and the workflow is designed to lower the barrier for anyone willing to pay.

After we shared our findings, the ElevenLabs security team moved quickly to investigate and take action. This kind of rapid, collaborative response between security researchers and AI vendors is critical, and it's exactly the model the industry needs as commercial AI tools increasingly appear in the criminal supply chain.

DTMF Capture

The platform captures victim keypad presses in real time via Socket.io events (call:dtmf). Digits accumulate in a per-call map and display live in the dashboard. There's no automated IVR or call tree. The operator manually controls the call flow, playing audio clips and watching digits come in.

The DTMF send API supports precise timing control with beforeMs, betweenMs, durationMs, and afterMs parameters, useful for interacting with real automated systems on behalf of the operator.

The Tech Stack

Frontend

The dashboard is a Next.js 14.2.35 application using the App Router, built with:

- React 18 with shadcn/ui components (completely unmodified default Slate theme)

- Radix UI primitives, Tailwind CSS, Lucide icons

- Zustand for state management, TanStack Query for server state

- Sonner for toast notifications

Backend

- Express.js origin server (confirmed via the x-powered-by: Express header)

- Prisma ORM with CUID v1 identifiers

- Cookie-based authentication with silent token refresh

- Socket.io for real-time events (call status, DTMF capture)

- Sits behind Cloudflare (CDN, DNS, registrar, and likely Tunnel)

VoIP Infrastructure

- Self-hosted Asterisk PBX running in a VM at 192.168.64.3:8088

- The backend proxies WebSocket connections to Asterisk through localhost:4001/asterisk/ws

- JsSIP library handles SIP registration and WebRTC call setup in the browser

- Google STUN servers (stun.l.google.com:19302, stun1.l.google.com:19302) for NAT traversal

- A console.log reference to an "Anveo bridge" strongly suggests Anveo Direct as the SIP trunk provider connecting to the PSTN

Payment and Registration

- Registration: Via Telegram bot (@p1botstagebot in the staging environment we captured). Usernames follow the pattern u_{random} with @telegram.local email suffixes.

- Pricing: $399/month (MONTHLY_399 plan)

- Payment: Cryptocurrency only via OxaPay gateway: BTC (Bitcoin Mainnet), LTC (Litecoin Mainnet), XMR (Monero Mainnet)

- Number provisioning: The platform offers phone number purchasing through its own API (/phone-numbers/available, /phone-numbers)

The 60+ Endpoint API Surface

The client-side code reveals a comprehensive REST API with over 60 endpoints spanning:

- Auth: Login, logout, refresh, API key management

- Calls: Create, list, delete, record, play audio, send DTMF, debug info

- SIP: Credential provisioning, rotation, external trunk configuration

- TTS: Voice listing, generation, preview, save

- Audio: Upload (WAV/MP3/OGG/FLAC/AAC/M4A, 10MB max), library management, streaming

- Membership: Checkout, payment status polling, recheck

- P1 (Top-up): Summary, region selection, link requests, crypto checkout

- Phone Numbers: Search available, purchase, list, delete

- Wallet: Balance, transactions

- Support: Ticket system with replies

- Webhooks: CRUD with secret rotation

- Organizations: Current org info and stats

- Messages: Listing and retrieval

Infrastructure and OPSEC

The operator has taken some care to obscure the origin infrastructure:

- Cloudflare everything: Domain registered through Cloudflare Registrar (2026-01-24), DNS through Cloudflare, CDN proxying all traffic. Likely using Cloudflare Tunnel, as no origin IP is exposed via Shodan or Censys.

- No origin leak: All IPs in the HAR resolve to Cloudflare edge nodes. The Asterisk PBX sits on a private RFC 1918 address (192.168.64.3), unreachable from the internet.

- Separate certificates: A Sectigo origin certificate (serial d83df698393d70fa78d5a6793131df2d) exists alongside the Cloudflare edge certificate (Google Trust Services), confirming a distinct origin server behind the proxy.

However, the client-side code leaks significant intelligence:

- The full API surface and business logic

- Internal infrastructure details (Asterisk IP, proxy port, SIP configuration)

- Third-party service integrations (ElevenLabs voice IDs, OxaPay provider check, Anveo reference)

- Debug logging that was never stripped for production
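
The address observations in this section are easy to verify mechanically when triaging a capture. A minimal sketch using Python's standard ipaddress module follows; the classify function and its labels are our own illustrative names, and only one of Cloudflare's published IPv6 prefixes is included, so a fuller check would load the complete published list.

```python
import ipaddress

# The three private IPv4 blocks defined by RFC 1918.
RFC1918_NETS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]

# One of Cloudflare's published IPv6 ranges (deliberately not the full list).
CLOUDFLARE_V6 = ipaddress.ip_network("2606:4700::/32")


def classify(address: str) -> str:
    """Label an address as RFC 1918 private, Cloudflare edge, or other."""
    ip = ipaddress.ip_address(address)
    # Membership tests across IP versions simply evaluate to False.
    if any(ip in net for net in RFC1918_NETS):
        return "rfc1918"
    if ip in CLOUDFLARE_V6:
        return "cloudflare-edge"
    return "other"


if __name__ == "__main__":
    observed = [
        "192.168.64.3",               # Asterisk address leaked in client code
        "2606:4700:3032::ac43:8773",  # address seen in the HAR
        "2606:4700:3030::6815:6ea",   # address seen in the HAR
    ]
    for addr in observed:
        print(addr, classify(addr))
```

A private address leaked in client code says nothing about where the origin is hosted, but it does fingerprint the operator's internal layout if the same subnet shows up in related samples.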

Vibecoded?

Strong indicators suggest the platform was built using AI-assisted coding (likely Claude via Cursor or a similar tool):

- 139 emoji-prefixed console.log statements throughout the codebase

- 132 box-drawing character debug blocks (a signature pattern of Claude-generated code)

- Unmodified default shadcn/ui Slate theme where every CSS variable matches the defaults exactly

- Overly verbose error messages that read like AI-generated diagnostic guides

The developer appears to have prompted an LLM to build the application and shipped it without cleaning up the debug output, which is precisely why we can extract so much intelligence from the client-side code. There's an irony here: AI was used to build the platform, AI powers its voice generation, and the AI-generated artifacts are what allowed us to tear it apart.
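
Counts like these can be reproduced against any captured JavaScript bundle with a couple of regular expressions. The sketch below is an assumption about how one might tally them, not the exact method used for the figures above: "emoji-prefixed" is taken to mean the first character of the logged string falls in a common emoji or symbol range, and a "box-drawing block" is taken to be a run of three or more characters from the Unicode Box Drawing block.

```python
import re

# console.log( followed by a quote and a character in a common emoji/symbol range.
EMOJI_LOG = re.compile(
    r"""console\.log\(\s*["'`][\u2600-\u27BF\U0001F300-\U0001FAFF]"""
)

# Runs of three or more characters from the Box Drawing block (U+2500 to U+257F).
BOX_RUN = re.compile(r"[\u2500-\u257F]{3,}")


def count_artifacts(js_source: str) -> dict[str, int]:
    """Count emoji-prefixed console.log calls and box-drawing runs in JS source."""
    return {
        "emoji_logs": len(EMOJI_LOG.findall(js_source)),
        "box_runs": len(BOX_RUN.findall(js_source)),
    }


# Hypothetical snippet in the style described above, not code from the platform.
SAMPLE = '''
console.log("🚀 Starting call setup");
console.log("════════════════════");
console.log('✅ Registered');
console.log("plain message");
'''

if __name__ == "__main__":
    print(count_artifacts(SAMPLE))
```

These counts are stylistic hints rather than attribution; plenty of human-written code logs with emoji, so they are best read alongside the other indicators in the list.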

Intelligence Summary

| Indicator | Value |
|---|---|
| Domain | p1bot.io / otp.p1bot.io |
| Registered | 2026-01-24 via Cloudflare Registrar |
| Framework | Next.js 14.2.35, Express.js backend |
| PBX | Asterisk @ 192.168.64.3:8088 |
| SIP Trunk | Likely Anveo Direct |
| TTS Provider | ElevenLabs (23 voices hardcoded) |
| Payment Gateway | OxaPay (BTC/LTC/XMR) |
| Pricing | $399/month |
| Registration | Telegram bot |
| Target Regions | US, Canada, UK |
| Origin Cert | Sectigo, serial d83df698393d70fa78d5a6793131df2d |
| Cloudflare Edge IPs | 2606:4700:3032::ac43:8773, 2606:4700:3030::6815:6ea |
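
The domain indicators are the most durable entries in the table, since the edge IPs are shared Cloudflare infrastructure. As a minimal sketch of how they might be applied to proxy or DNS logs, the helper below matches a hostname against the listed domain and any subdomain; the function name and the normalization choices are our own assumptions.

```python
# Registered domain from the indicator table; subdomains such as otp.p1bot.io
# are covered by the suffix check below.
IOC_DOMAINS = {"p1bot.io"}


def matches_ioc(hostname: str) -> bool:
    """Return True if hostname is an IOC domain or a subdomain of one."""
    # Normalize case and drop a trailing root dot, as seen in some DNS logs.
    host = hostname.strip().rstrip(".").lower()
    return any(host == d or host.endswith("." + d) for d in IOC_DOMAINS)


if __name__ == "__main__":
    for name in ["otp.p1bot.io", "P1BOT.IO.", "notp1bot.io", "example.com"]:
        print(name, matches_ioc(name))
```

Matching on the dot-separated suffix rather than a plain substring avoids flagging unrelated lookalike names such as notp1bot.io.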

The Bigger Picture: AI in the Criminal Supply Chain

p1bot isn't an anomaly. It's a signal. The same commercial AI services that enable legitimate products are being absorbed into criminal infrastructure. OpenAI's threat reports document LLMs being used to scale social engineering. Google's Mandiant team has demonstrated AI voice cloning in offensive operations. And now we're seeing ElevenLabs' voices integrated directly into a subscription vishing platform.

The pattern is consistent across every major AI vendor's threat reporting: attackers aren't developing novel AI capabilities. They're subscribing to the same commercial services as everyone else and bolting them onto established criminal playbooks. The barrier to entry drops with every new API.

This is why vendor collaboration matters. When we reported our findings to ElevenLabs, their security team responded quickly, investigated the abusive accounts, and took action. That kind of responsiveness, combined with the traceability features ElevenLabs has built into their platform, demonstrates that disruption is possible when researchers and vendors work together.

This research was conducted by the Mirage Security team. If you're interested in how your organization would fare against AI-powered vishing, smishing, and social engineering simulations, get in touch.